Meet Dr. Melya Boukheddimi

Senior Researcher in Robotics - DFKI Bremen

Please introduce yourself briefly and describe your current role at DFKI.

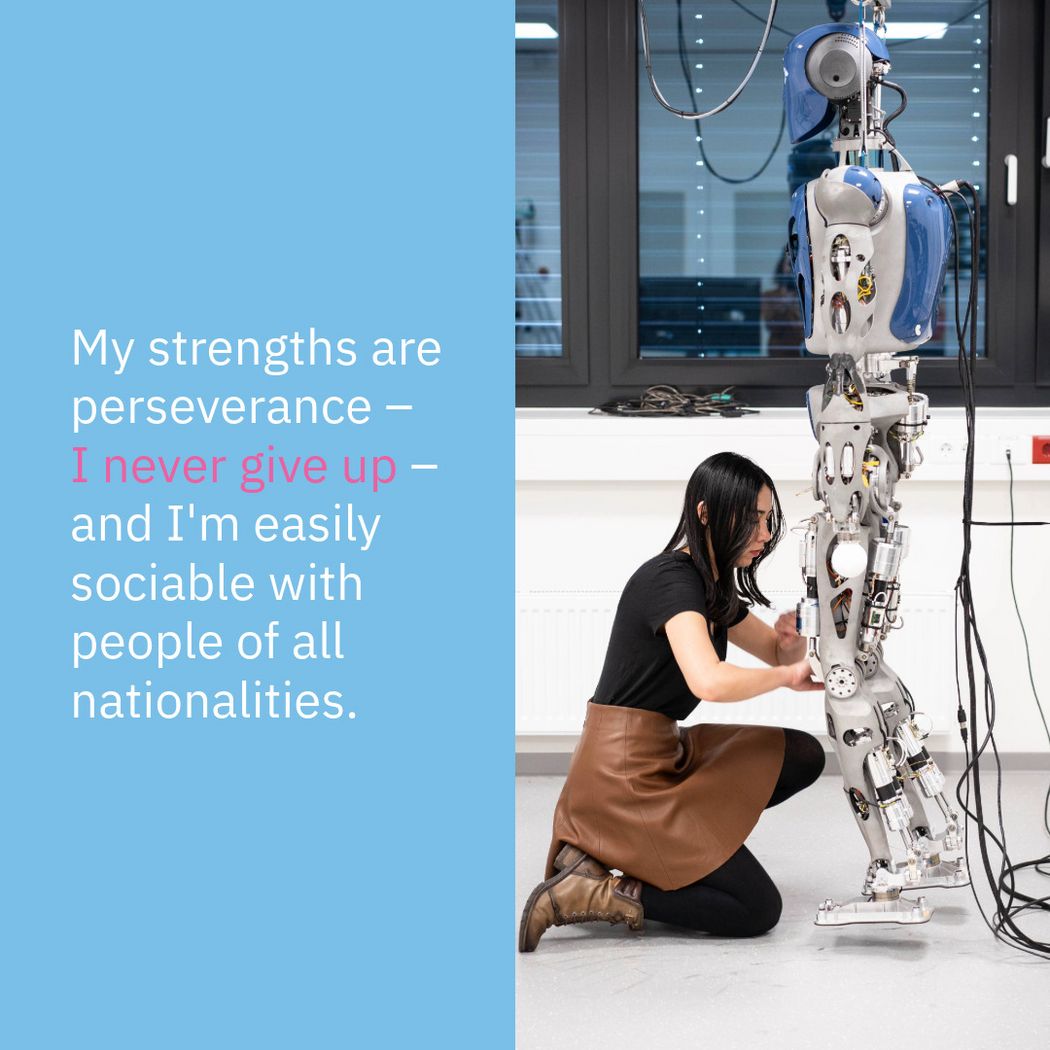

My name is Melya Boukheddimi. I'm from Algeria, and I'm currently a senior researcher in robotics at DFKI Bremen. I joined DFKI three years ago after completing my PhD in robotics at the Laboratory for Analysis and Architecture of Systems of the French National Centre for Scientific Research (LAAS-CNRS) in Toulouse.

In which of the 26 research departments do you work at DFKI?

I work at the DFKI Robotics Innovation Center in Bremen, in the mechanics and control team. Our research focuses on the intelligent design of powerful robotic systems and on new control algorithms for generating highly dynamic movements.

What are you working on at the moment, or in other words, what are your plans for saving the world?

We are currently working on several projects involving humanoid robots and exoskeletons. The ultimate aim of these projects is to have robots that can perform tasks not only for humans but also in collaboration with them. We're also working on making our robots safe and anthropomorphic, so that people feel comfortable with them and can trust them.

What are your strengths and what has been your greatest success or favourite experience so far?

My strengths are perseverance (I never give up) and sociability: I get along easily with people of all nationalities. I would say that my favourite experience so far has been working on the Dancing Robot paper, which presents a novel methodology for formalizing robot motion using musical dance. The teamwork was amazing, and the paper was selected as a finalist for the Best Entertainment Paper at the International Conference on Intelligent Robots and Systems (IROS) 2022 in Japan. We all really enjoyed the work.

What do you enjoy most about your job at DFKI? What inspires and fascinates you?

I love working with robots. At the DFKI Robotics Innovation Center, we're all lucky enough to work with several robots that our research institute has built, which means we can test a wide range of algorithms on them without worrying too much about breaking them. Because they're built in-house, we can repair them in our own workshops if they break down, which is very rare among robotics research institutes.

If you weren't a scientist, what would you have become?

I think doing a PhD has always been a pretty obvious thing for me, so I'd say I would maybe teach or be an engineer in a company. I've also thought a lot about setting up my own start-up, and that's always an open possibility for the future.