The observation and analysis of strategic developments in the area surrounding the technology base of a company's products and services is a decisive competitive success factor in a globalized economy. Conventional tools to support this task, such as technology roadmapping or technology radars, are usually created and maintained by manually researching market-relevant data sources. With the rapidly accelerating, globally distributed R&D landscape with ever shorter development cycles and the resulting increase in data and information, this can only be achieved with a great deal of manual labor.

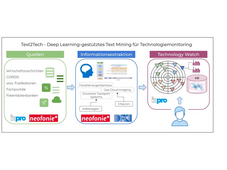

The goal of the Text2Tech project is the research and development of automated methods for information extraction from unstructured text sources in order to be able to provide companies with decision-relevant knowledge about technological developments quickly and efficiently. AI-based methods for information extraction (IE) already make it possible to extract selected information, e.g. B. to people, companies and places automatically from text sources. In the Text2Tech project, such approaches are to be further developed in order to extract machine-readable knowledge about technologies, technology categories, companies and their relationships with each other from German and English-language, domain-specific text sources, using the example of the automotive industry. The most important research goals are the modeling and "filling" of domain-specific knowledge graphs (Knowledge Base Population), the development of methods for cross-lingual proper name recognition and linking (Named Entity Recognition or Entity Linking), relation extraction (Relation Extraction), as well as the development of Model compression methods so that models run efficiently even on "small" hardware.

The DFKI is involved in the project with the SLT divisions. The focus of the work of SLT is the research of transfer learning approaches for information extraction, domain adaptation, as well as learning and model evaluation in scenarios with little data.

Partners

- Neofonie GmbH / OntoLux - inpro GmbH