MEDIUS

Multi-level coupled laser production technology with AI-based decision platform

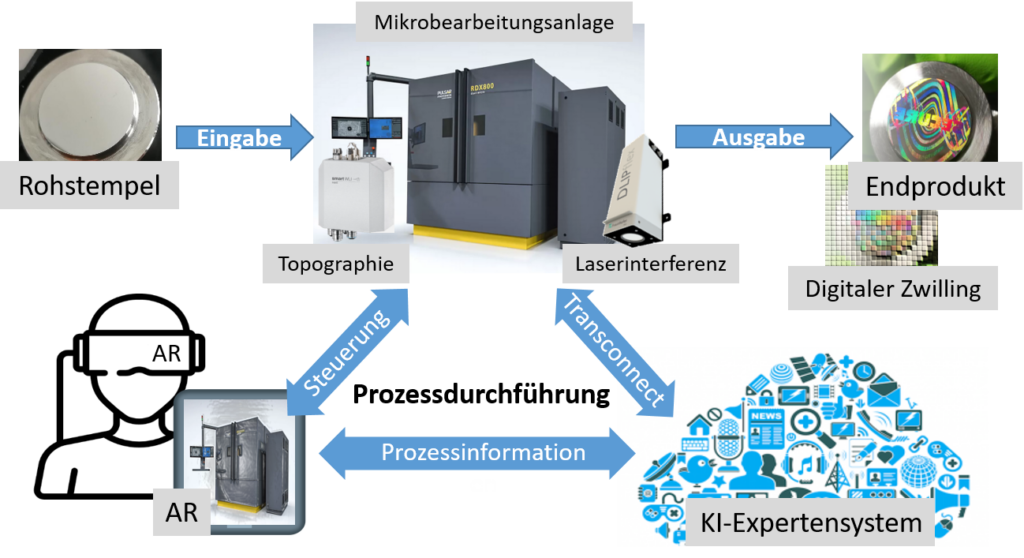

The MEDIUS project brings together an ambitious consortium from the fields of laser technology, artificial intelligence, human-machine interaction, data communication and surface metrology with the vision of coupling a disruptive laser manufacturing technology based on Direct Laser Interference supported by Augmented Reality (AR) with an AI-based learning expert platform.

The goal is to fully control laser process technology using AI-based intuitive human-machine interfaces in the context of a learning manufacturing platform for surface functionalization.

This will lead to:

- significant simplification and improved transparency of both machine operation and usage as well as process control

- significant reduction in design and development time for functional surfaces by 50%-60%

- reduction in start-up time by eliminating daily calibration

- improved safety and stability of the manufacturing process by reducing the workload of the machine operator

- reduction in training time for new users

- improved machine and process reliability

The contribution of iQL is the measurement of the cognitive load of the operator, the detection of the current cognitive state and the automatic adaptation of the AR-generated input to the given states.

KAINE

Knowledge based learning platform with Artificial Intelligent structured content

The goal of the research project KAINE (Knowledge based learning platform with Artificial Intelligent structured content) is to better take into account the prior knowledge and experience of the participants in the learning process through AI, so that they can continue their education more efficiently.

Part-time continuing education is increasingly caught between conflicting demands: reconciling family and work, ever shorter continuing education cycles, imparting increasingly complex knowledge through more complex, interdisciplinary competence profiles, rising demands on learning efficiency, opening continuing education to new educational biographies, and a sustainable transfer of knowledge into everyday business life. Resolving this tension as well as possible calls for research, development and testing of intelligent teaching-learning offerings.

The research project KAINE comprises three aspects in development field III, which complement each other and, in interaction, promise great added value for the teaching-learning process in continuing vocational training. The first is the algorithm-based individualization of the learning process and of the learning journeys (curriculum), implemented with methods of artificial intelligence: user and usage data are used for clustering (industry, prior knowledge, professional experience) in combination with learning analytics (learning habits).

Second, nearly barrier-free accompaniment of the learning process by a voice bot or chatbot enables adaptive support independent of time and place. For learning status diagnostics, the learning process is compared with the learner model by means of a guided interview and, if necessary, individualized assistance is offered. In addition, individual learning needs are addressed by specific supplementary material, which is made available through the algorithm-based structuring of unstructured learning materials.

Third, the transfer of newly acquired competencies into the company is supported by a dialog-oriented consulting system. Specific assistance is offered for the individual transfer and implementation phases. Regular surveys of former participants continuously improve the consulting process and increase the sustainability of continuing vocational training. The new learning assistance systems are tested by the practice partners in, among others, the in-service continuing education course "Digital Transformation. Co-determine. Mitgestalten" for works councils.

InCoRAP

Intent-oriented cooperative robot action planning and worker support in factory settings

Flexible production in modern smart factories requires effective support of human workers and smooth cooperation with assisting robots. However, in a factory scenario where the worker performs manual tasks, the hands are often not free to interact with assistance systems. Voice commands are not practical either due to factory noise. Hence, to provide well-adapted and acceptable support, both a worker assistance system and robotic action planning need to anticipate the worker’s future activities.

Existing approaches to worker assistance leverage comparatively coarse-grained information such as the worker’s trajectory in the environment. In contrast, we base any assistive functionality (including the actions of the robot) on high-level intentions.

To infer such high-level intentions, we regard sensor data in relation to the worker’s current task. This requires a comprehensive, detailed model of the factory environment, integrating information from a rich variety of sensors as well as sophisticated process information stemming, e.g., from an ERP system. The environment model must be accessible for various purposes and on different levels of interpretation and detail.

The detection of human intention from available sensor data, the generation and maintenance of such a multi-sensor-based hierarchical semantic model of the current environment, and their usage for action planning and situation-adequate assistance comprise the R&D focus of the project.

HyperMind - The Anticipating Textbook

The anticipating physics textbook, which we are developing in the HyperMind project, is a dynamically adaptive personal textbook and enables individual learning. HyperMind starts at the micro level of the physics textbook, which contains the individual forms of presentation, so-called representations, of a textbook - such as the text of a textbook with a certain proportion of technical terms, formulas, diagrams or images.

The static structure of the classic book is dissolved. Instead, the book's content is portioned and the resulting knowledge modules are linked associatively. In addition, the modules are supplemented with multimedia learning content, which can be called up on the basis of attention (eye) data.

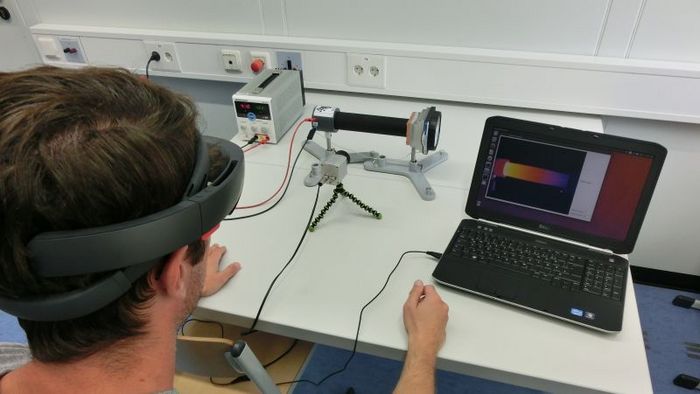

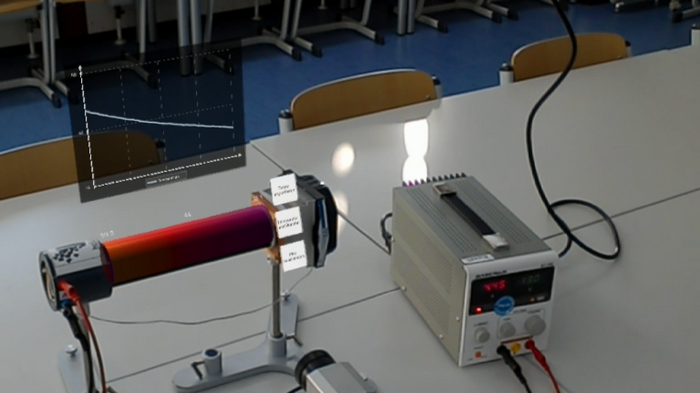

Be-greifen

This project explores innovative human-technology interaction (HTI) that, by merging the real and digital worlds (augmented reality), makes the connection between experiment and theory comprehensible, tangible and interactively explorable in real time for students of STEM subjects.

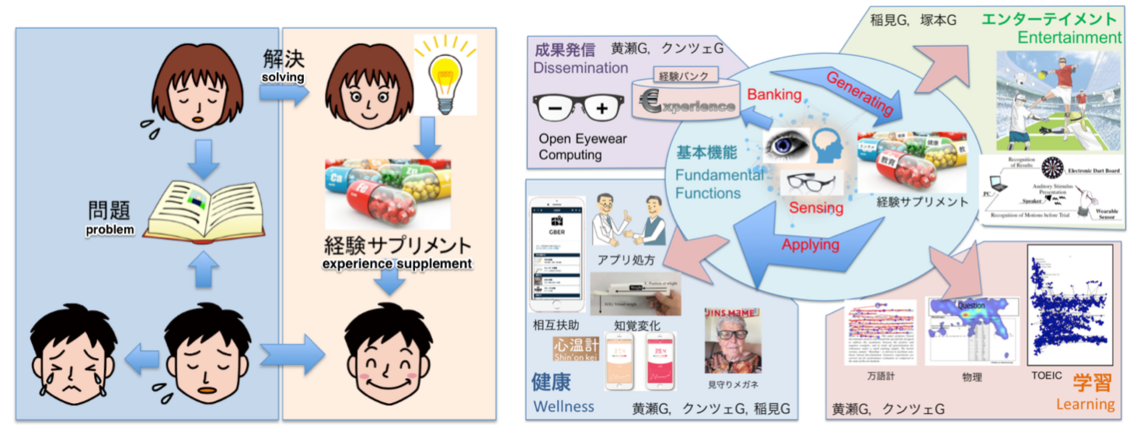

ESPEDUCA - JST CREST (in cooperation with the Osaka Prefecture University)

Knowledge can be shared via the Internet. However, most of this information is limited to "explicit knowledge" such as text and does not cover "implicit knowledge", such as the experience of doing something oneself. The aim of this project is to record these experiences with the help of sensors and to make them available to others.

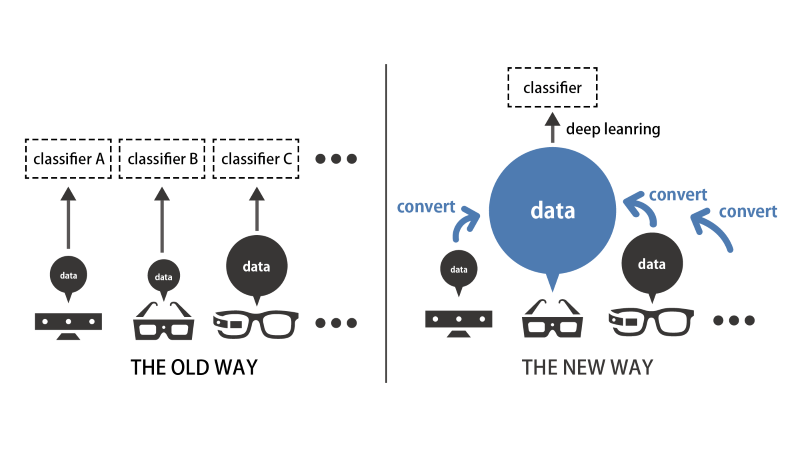

Eyetifact and GazeSynthesizer (in cooperation with the Osaka Prefecture University)

Deep learning technologies have significantly accelerated the processing of images, audio and speech. Activity detection has not yet benefited from this, as it is difficult to obtain the large amount of data needed to train deep learning algorithms in this area. The more sensitive and complex the sensors are, the more difficult it is to obtain the required amount of data. Eyetifact uses deep learning to combine sensor data from different sources into a data set, so that the data of the other sensors can be predicted when only individual sensors are used later.

To improve the readability of texts, they must first be read by a large group of people whose eye movements are recorded and evaluated. GazeSynthesizer builds a data set from measurements on a few pages and uses deep learning to generate artificial eye movements, which makes it possible to simulate the readability of unknown texts depending on parameters such as age or cultural background.

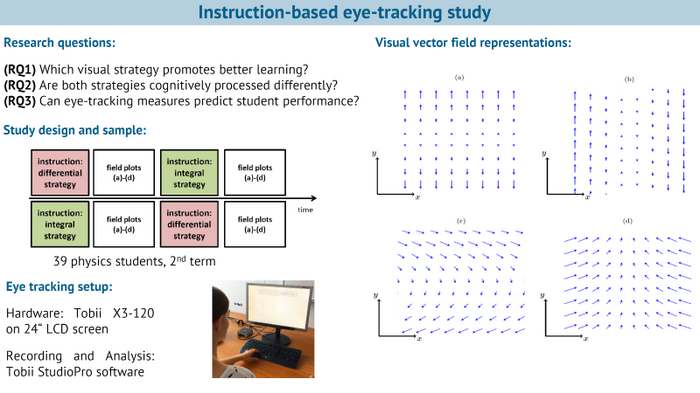

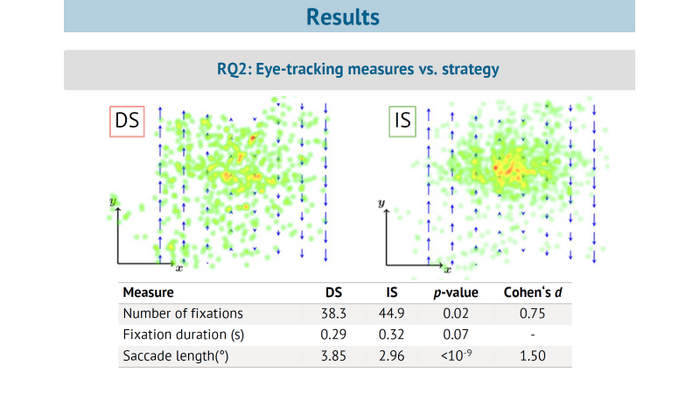

SUN - Analyzing student understanding of vector field plots with respect to divergence

Visual understanding of abstract mathematical concepts is crucial for scientific learning and a prerequisite for developing expertise. This project analyses the understanding of students when viewing vector fields using different visual strategies.
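As a brief illustration of the quantity students are asked to judge from such plots, the following minimal sketch approximates the divergence of a two-dimensional vector field, div F = ∂Fx/∂x + ∂Fy/∂y, with central finite differences. The function names, the step size `h` and the two example fields are assumptions chosen for illustration and are not taken from the project.

```python
from typing import Callable, Tuple

Vector = Tuple[float, float]


def divergence(field: Callable[[float, float], Vector],
               x: float, y: float, h: float = 1e-4) -> float:
    """Approximate div F = dFx/dx + dFy/dy at (x, y) using central differences."""
    # Change of the x-component along the x-direction
    dfx_dx = (field(x + h, y)[0] - field(x - h, y)[0]) / (2 * h)
    # Change of the y-component along the y-direction
    dfy_dy = (field(x, y + h)[1] - field(x, y - h)[1]) / (2 * h)
    return dfx_dx + dfy_dy


def radial(x: float, y: float) -> Vector:
    """Hypothetical example: arrows point away from the origin (a source)."""
    return (x, y)


def rotational(x: float, y: float) -> Vector:
    """Hypothetical example: arrows circle the origin (no source or sink)."""
    return (-y, x)


if __name__ == "__main__":
    print("radial field:    ", divergence(radial, 0.5, -0.3))
    print("rotational field:", divergence(rotational, 0.5, -0.3))
```

In a plot, the radial field shows arrows spreading outwards and has a divergence of about 2 everywhere, while the rotational field looks just as "busy" but has a divergence of about 0. Telling the two apart visually is the kind of distinction such vector field plots require.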

Contact

Dr. Nicolas Großmann

Phone: +49 631 20575 5304

nicolas.grossmann@dfki.de

Deutsches Forschungszentrum für Künstliche Intelligenz GmbH (DFKI)

Research Department Smart Data & Knowledge Services

Trippstadter Str. 122

67663 Kaiserslautern

Germany