"What will I see when I turn this corner?"

Often this question is easy for us to answer. We expect to see a refrigerator in a kitchen, ships at a port, and a streetlight around a street corner. We form these expectations intuitively, based on our knowledge and experience of our surroundings, and they help us make decisions day after day.

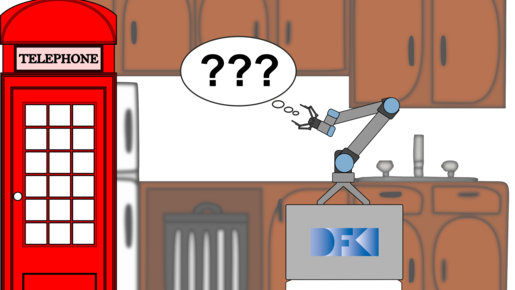

What is more, our expectations help us perceive objects in our environment. In a kitchen, a box with a door is more likely to be a refrigerator than a phone booth.

Autonomous robots can also use knowledge about their environment, though only to a limited extent. Such knowledge is often available in symbolic form. Algorithms for planning or decision making, for example, can already draw on symbolic knowledge, but it is harder to use that knowledge to influence a robot's "perception".

In recent years, there has been tremendous progress in robot perception, especially in object recognition. Neural networks evaluate sensor data, such as images, and can identify a wide variety of objects with great success. This already works for various kinds of sensor data; incorporating symbolic knowledge, however, remains a problem.

The ExPrIS project explores approaches that integrate expectations about the environment into the object recognition of autonomous robots.

The challenge is twofold. On the one hand, the expectations must be context-dependent: a phone booth is more likely to be seen on the street than in a kitchen. On the other hand, these expectations should be integrated directly into the learning and recognition process of neural networks.
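To make the idea of a context-dependent expectation concrete, here is a minimal sketch in Python. It is not the ExPrIS method. It shows only the simplest possible variant, in which a recognizer's class scores are reweighted after the fact by a context prior, whereas the project aims to integrate expectations into learning and recognition themselves. The function `apply_context_prior`, the table `CONTEXT_PRIORS`, and all numbers are hypothetical and chosen purely for illustration.

```python
def apply_context_prior(scores, prior, floor=1e-6):
    """Reweight class scores by a context prior and renormalize.

    scores: dict mapping label -> probability from a recognizer (assumed input)
    prior:  dict mapping label -> how strongly the context expects that label
    floor:  small weight for labels the context says nothing about
    """
    weighted = {label: p * prior.get(label, floor) for label, p in scores.items()}
    total = sum(weighted.values())
    return {label: w / total for label, w in weighted.items()}


# Hypothetical expectations per context; the values are made up for illustration.
CONTEXT_PRIORS = {
    "kitchen": {"refrigerator": 0.9, "phone_booth": 0.1},
    "street": {"refrigerator": 0.1, "phone_booth": 0.9},
}

# An ambiguous "box with a door": the recognizer alone cannot decide.
detector_scores = {"refrigerator": 0.5, "phone_booth": 0.5}

in_kitchen = apply_context_prior(detector_scores, CONTEXT_PRIORS["kitchen"])
on_street = apply_context_prior(detector_scores, CONTEXT_PRIORS["street"])

print(max(in_kitchen, key=in_kitchen.get))
print(max(on_street, key=on_street.get))
```

With these made-up numbers, the same ambiguous detection is resolved differently depending on the context, which is the effect the expectations are meant to have.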

This will enable a robot to actively use existing information and experience in its perception and thereby improve object recognition. Combined with existing methods for decision making, this should make the behavior of autonomous robots more stable and reliable.

Prof. Dr. Martin Atzmüller
